If you are running UCS hardware with ESXi, then you should be using the custom ENIC/FNIC drivers specified in the Hardware and Software Interoperability Matrix.…

UCS Blades Power Off Unexpectedly

I was called into an interesting issue over the past week. I was told that a chassis worth of UCS blades had powered off without…

WARNING: CpuSched: XXXX: processor apparently halted for XXXX ms

While I have seen people discuss this error message and its solution, I figured it would be a good idea to discuss it in terms of specific…

Cisco UCS B230 M2 Caveats

The latest half-width Cisco UCS B-series servers are beautiful pieces of machinery. In such a small footprint it is possible to get two processors each…

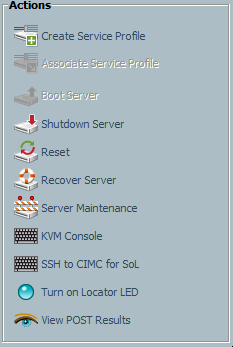

UCSM KVM Console Displays Black Screen

Over the past several weeks, I have been standing up new hardware in order to expand a cloud infrastructure. During this process, I was tasked…