Any issue has been discovered where upgraded instances of vR Ops from 6.0.x to 6.1 that were integrated with Log Insight no longer display Log…

Heads Up! Log Insight Fails to Start with: Cannot Connect

I have heard of a few people who have restarted the Log Insight 2.5 virtual appliance and found that the Log Insight service failed to…

Heads Up! Log Insight 2.0 and Upgraded vCenter 4.x Issue

I just wanted to make people aware of a new VMware Knowledge Base (KB) article that has been published regarding Log Insight 2.0.

BUG ALERT: UCS FNIC Driver 1.5.0.8 + ESXi 5.x = PSODs

If you are running UCS hardware with ESXi then you should be using custom ENIC/FNIC drivers as specified on the Hardware and Software Interoperability Matrix.…

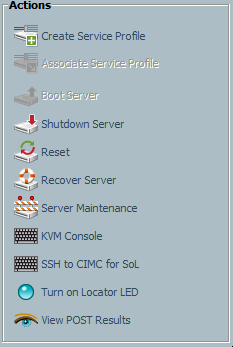

UCS Blades Power Off Unexpectedly

I was called into an interesting issue over the past week. I was told that a chassis worth of UCS blades had powered off without…

Cisco Bug: Show Commands Cause (Dual) Fabric Reboot(s)

Over the last two weeks I have been hit by the same UCS bug, though by different means, twice and as such I would like…

WARNING: CpuSched: XXXX: processor apparently halted for XXXX ms

While I have seen people discuss this error message and solution, I figured it would be a good idea to discuss in terms of specific…

Bug in PowerCLI 4.1.1: Set-VIRole

I was trying to set up some permissions on vCenter Server using PowerCLI. Here is an example of a command I was running: foreach ($role…

Show VLAN

If you are a network administrator, then you probably know that on many switches typing the command ‘show run’ will display the running switch configuration…