Recently, I talked about Log Insight cluster data imbalance and why you want to avoid it. In this post, I would like to discuss when you might choose to create data imbalance on purpose.

WARNING: The reasons listed in this post are not officially supported. Proceed at your own risk.

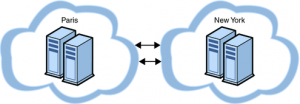

Distributed cluster

What is it?

A distributed cluster is one whose nodes are deployed in different locations.

Example use cases?

The simplest example would be the case where a user has multiple data centers and wants to deploy nodes in each data center while managing and querying from a single location (i.e. the master). Another possible example would be a secure environment where collectors are necessary in a DMZ or a separate network.

Non use case

One common use case I hear is avoiding sending syslog traffic over a WAN because of bandwidth concerns. While I can understand where this concern comes from, I would like to disprove it and discourage using a distributed cluster for this reason alone. The thought process is based on the amount of data collected over a day. For example, I have heard many people state that they collect, “many GBs of logs a day.” I have seen environments where over a TB of data is collected a day. Sounds like a lot, right? Sounds like a potential network bandwidth issue, right? Well, let’s break down the numbers.

Let’s assume you are collecting 7,500 events per second (the maximum a single Log Insight node supports today). The average packet size of an event is around 175 bytes (for vSphere). This means that 7,500 EPS * 175 bytes * 8 bits per byte = ~0.01 Gb/s, or about 10.5 Mb/s (7,500 EPS * 175 bytes * 60 seconds in a minute * 60 minutes in an hour * 24 hours in a day = ~113.4 GB of traffic a day). In a cluster environment with six nodes, we are talking 0.01 Gb/s * 6 = ~0.06 Gb/s (113.4 GB * 6 = ~680.4 GB of traffic a day). So even a fully loaded cluster generates well under 100 Mb/s of sustained traffic. I hope your WAN can keep up with that!
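The arithmetic above can be checked with a short script. This is only a back-of-the-envelope sketch: it uses the event rate, event size, and multiplier of six from the text, works in decimal units (1 GB = 10^9 bytes), and ignores protocol overhead. The function names are my own.

```python
# Back-of-the-envelope syslog bandwidth estimate using the figures from the text.
EVENTS_PER_SECOND = 7_500      # per-node ingestion rate quoted in the text
BYTES_PER_EVENT = 175          # approximate average vSphere event size
NODES = 6                      # multiplier used in the text for a cluster
SECONDS_PER_DAY = 60 * 60 * 24


def bandwidth_gbps(eps: int, event_bytes: int, nodes: int = 1) -> float:
    """Return sustained bandwidth in gigabits per second (decimal units)."""
    return eps * event_bytes * 8 * nodes / 1e9


def daily_volume_gb(eps: int, event_bytes: int, nodes: int = 1) -> float:
    """Return data volume per day in gigabytes (decimal units)."""
    return eps * event_bytes * SECONDS_PER_DAY * nodes / 1e9


if __name__ == "__main__":
    for nodes in (1, NODES):
        gbps = bandwidth_gbps(EVENTS_PER_SECOND, BYTES_PER_EVENT, nodes)
        gb_day = daily_volume_gb(EVENTS_PER_SECOND, BYTES_PER_EVENT, nodes)
        print(f"{nodes} node(s): {gbps:.4f} Gb/s, {gb_day:.1f} GB/day")
```

Plugging in your own event rate and average event size is a quick way to see whether WAN bandwidth is really the constraint before reaching for a distributed cluster.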

Federation

It is also important to note that a distributed cluster is different from a federated cluster. With federation, a cluster would be deployed per <something> (e.g. data center), and a central master would be created to manage and query the other deployed masters. With federation, not only do you have local data collection, you also have local data query.

How it works

A typical cluster is created, but its node(s) are distributed across different locations. For the cluster to function, specific network ports need to be opened to allow cluster communication between the nodes. In addition, separate external load balancer VIPs need to be created for the different locations where node(s) are deployed to ensure traffic stays local as desired (or a global load balancer VIP can be created, depending on the requirements).

Different data retentions

What is it?

The ability to retain one subset of events for a longer or shorter period than another subset of events.

Example use case

Security logs typically need to be kept longer than infrastructure logs. Infrastructure logs are typically used for troubleshooting and root cause analysis (RCA). In most environments, keeping infrastructure logs for longer than two weeks does not make sense because they are unlikely to be queried after two weeks (trending is different from troubleshooting and RCA). Security logs, on the other hand, are necessary for longer periods of time for a variety of reasons, including compliance. In addition, security logs are typically less verbose than infrastructure logs (of course there are exceptions, such as IDS/IPS), so without different data retention periods security logs would get pushed out by the more voluminous infrastructure logs.
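To illustrate how the quieter log type gets pushed out, here is a rough sketch. It assumes storage is consumed first-in, first-out, so retention is approximately capacity divided by daily ingest. The capacity and volume numbers are made up purely for illustration and do not come from any real environment.

```python
def retention_days(capacity_gb: float, daily_ingest_gb: float) -> float:
    """Approximate days of history kept when the oldest data is rotated out first."""
    if daily_ingest_gb <= 0:
        raise ValueError("daily_ingest_gb must be positive")
    return capacity_gb / daily_ingest_gb


# Hypothetical numbers, chosen only to make the arithmetic easy to follow.
NODE_CAPACITY_GB = 1_000
INFRA_GB_PER_DAY = 95      # verbose infrastructure logs
SECURITY_GB_PER_DAY = 5    # quieter security logs

# Shared storage across two nodes: both log types age out together.
shared = retention_days(2 * NODE_CAPACITY_GB, INFRA_GB_PER_DAY + SECURITY_GB_PER_DAY)

# Separate storage, one node per log type: each type ages out on its own schedule.
infra_only = retention_days(NODE_CAPACITY_GB, INFRA_GB_PER_DAY)
security_only = retention_days(NODE_CAPACITY_GB, SECURITY_GB_PER_DAY)

print(f"shared: {shared:.0f} days for everything")
print(f"separate: infra {infra_only:.1f} days, security {security_only:.0f} days")
```

With these made-up figures, the same total storage keeps security events far longer once they no longer compete with infrastructure events for space, at the cost of shorter infrastructure retention.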

How it works

A typical cluster is created but node(s) are dedicated to a subset of data. To properly handle this, different VIPs are created on the external load balancer and devices are pointed to the appropriate VIP as desired.
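As a minimal sketch of the "point devices at the appropriate VIP" step, the mapping below decides which VIP a device should use based on the type of logs it emits. The hostnames, device names, and log type labels are hypothetical; the real VIPs are whatever you define on your external load balancer.

```python
# Hypothetical VIP hostnames; substitute the VIPs defined on your own load balancer.
VIP_BY_LOG_TYPE = {
    "security": "loginsight-security.example.com",
    "infrastructure": "loginsight-infra.example.com",
}
DEFAULT_LOG_TYPE = "infrastructure"


def syslog_target(log_type: str) -> str:
    """Return the VIP a device emitting this type of log should send to."""
    return VIP_BY_LOG_TYPE.get(log_type, VIP_BY_LOG_TYPE[DEFAULT_LOG_TYPE])


# Hypothetical inventory: device name -> type of logs it produces.
devices = {
    "firewall-01": "security",
    "esxi-01": "infrastructure",
    "switch-07": "network",  # unknown type falls back to the default VIP
}

for name, log_type in devices.items():
    print(f"{name} -> {syslog_target(log_type)}")
```

Keeping the mapping in one place makes it easier to audit which devices feed which dedicated node(s).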

Summary

There may be cases where you want to create a data imbalance on purpose. When doing so, be sure to consider the implications of that decision when architecting your cluster.

© 2014 – 2021, Steve Flanders. All rights reserved.